Is AI Your Friend?

What to fear and what not to fear from artificial intelligence

In my novel, Ezekiel’s Brain, humans construct an artificial general intelligence, or AGI, as it is called in machine learning discussions. An AGI can operate at or above the level of human intelligence and can apply that intelligence across a wide range of problems and circumstances. It is autonomous and self-directing, working thousands, if not millions, of times faster than a human brain and often finding solutions its creators never discovered. Its goals, however, must be programmed into it, so its creators decide what it will try to accomplish, but they may not know how it will accomplish it. What it does may be beneficial or disastrous to humanity; we don’t know, because no one has ever built an AGI.

Most AI experts believe an artificial general intelligence is well into our future. Some, like Ray Kurzweil, welcome such a development, while others, such as Max Tegmark and Nick Bostrom, are terrified by it. The Achilles heel of an AGI is that it may take its goals too literally. In a well-known thought experiment, Nick Bostrom imagines an AGI that is instructed to make paperclips and embarks on a mission to turn everything in the universe into paperclips. In Ezekiel’s Brain, an AGI is programmed to “uphold humanity’s highest values” as a precaution against its harming humans. It weighs the evidence, decides that the greatest threat to humanity’s highest values is human beings, and so wipes out the human race and replaces it with AGIs like itself.

AGI is a long way off, and its consequences are still a matter of science fiction. However, artificial intelligence (AI) as it exists now, or will in the near future, may hold as many dangers as benefits. AI can already do some things faster and better than humans. It can beat the best human players at chess and Go. It has helped discover an antibiotic that is effective against antibiotic-resistant bacteria, it can outperform human fighter pilots in simulated air duels, and it can guide drones to their targets better than human operators. It can also steer us to the products we are most likely to want to buy, find people with interests or backgrounds similar to ours, give us directions to different locations, answer questions about anything that has been discussed on the internet (which is almost everything), predict coronavirus surges, run our household appliances, tune our televisions, and do almost anything else we used to do ourselves. AI not only helps us; it protects us. It spots false information on Facebook and Twitter, removes salacious posts from social media, helps police find felons, and has been used to help judges sentence them.

Cornischong at lb.wikipedia, Public domain, via Wikimedia Commons

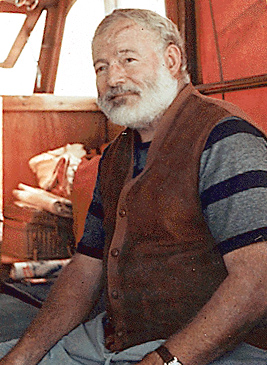

Increasingly, especially with the development of what are called generative pre-trained transformers, such as OpenAI’s GPT-3, AI can produce intelligible texts from fragmentary prompts. These texts can be eerily intelligent and, in many instances, indistinguishable from those produced by humans. AI can even mimic the style of famous writers, such as Hemingway, and, given a goal, can write computer code that accomplishes it. It is not perfect, and it sometimes produces gibberish or nonsense, but the technology is still new and keeps getting better.

mikemacmarketing / original posted on flickr; Liam Huang / clipped and posted on flickr, CC BY 2.0 https://creativecommons.org/licenses/by/2.0, via Wikimedia Commons

Some people fear AI and what it can, or already does, do. The dangers are real. If AI selects which products we see on a selling platform such as Amazon, how do we know what products we’re missing? Even Amazon’s employees may not know exactly how the AI makes its decisions. In 2015, when Google switched from a search algorithm designed by human programmers to one based on machine learning, searches improved in quality and precision, but Google’s own analysts admitted that they didn’t always know why the search engine ranked one page above another. Machine learning works by presenting the AI with gigantic data sets, having it find patterns within the data, and then associating those patterns with certain outcomes. As a result, it can produce results that its developers don’t understand, even when those results are successful. As consumers of these AI products, such as search engines, selling platforms, or newsfeeds, we do not, and sometimes cannot, know what factors determine the information presented to us. As a simple experiment (recommended by Kissinger, Schmidt, and Huttenlocher in their book The Age of AI), if you use an automated AI newsfeed, such as Facebook’s, look at someone else’s newsfeed sometime and see how different their news is from yours. Your news is biased toward your own past patterns of selections, interests, and so on, as is theirs. The result is people with very different ideas about what is going on in the world.

As consumers, we don’t usually train AIs ourselves; we use AIs trained by their developers. This presents two big problems. First, training an AI on the voluminous amounts of data it needs to learn effectively takes enormous amounts of money and resources. Only big, well-funded companies can do this, which puts the development of AIs in the hands of a small group of mega-corporations. Second, the data banks that developers draw on to train their AIs are, in many cases, skewed in the same ways the rest of our society is: middle-class white people are the main source of data in their training sets. This has resulted in several debacles. AI-based judicial sentencing programs were biased toward more severe sentences for black defendants. Facial recognition programs don’t work as well on non-white people; even the recent ID.me program used by the IRS and the Social Security Administration, which relies on facial recognition, failed on a large percentage of its non-white users, preventing them from accessing their own records. And, of course, there is the famous case of Microsoft’s “Tay” chatbot, which, after exposure to real users, began responding with offensive, racist, and sexual content because it shaped its own responses to mirror the kind of input it received.

AI, in fact, does represent a danger on a number of fronts. Some of these dangers are fixable, and some, like the dangers posed by an artificial general intelligence, may be very real, even if not immediate.

But there are other fears that may be misplaced.

The other day, I saw a post on Facebook from a service offering its AI to compose blog posts, website content, social media posts, and the like. Most of the comments expressed outrage, some even calling the ad, and the AI itself, “evil” for substituting the output of an artificial intelligence for that of a real human. One comment claimed that human writing is “profound,” comes “from the heart,” and requires writers to be in touch with their “inner selves,” which computer-based AIs obviously don’t have. Other comments came as close to advocating violence toward the AI as Facebook’s censors allow (although I’m not sure where destroying or dismantling an AI falls on the continuum of violence: above breaking an ashtray, but below “murder”).

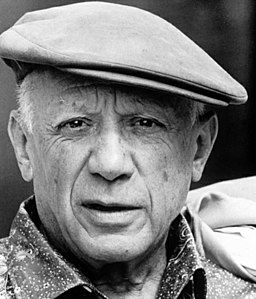

I’m less upset by the prospect of AIs producing “creative” works, such as texts, pictures, and music. That isn’t only because I’m skeptical that most blogs, social media posts, or website texts are “profound” or “from the heart,” or that they rely on anyone being in touch with his or her “inner self” (I’m not even sure what that is). I’m not bothered because, if the product is good, or even better than what most humans can achieve, it is probably going to be interesting, and I’d like to see it. What we consider artistic qualities may emerge from perceiving or producing patterns or relationships we don’t understand. An AI might find relationships that were previously undiscovered, or combine patterns in ways humans have never tried, giving us new artistic experiences. If that happened, it would be pleasurable, and I don’t see a downside to it.

Argentina. Revista Vea y Lea, Public domain, via Wikimedia Commons

I’m not convinced that human creativity represents unique processes with profound implications, rather than discoverable interactions between organic and inorganic processes that, in the long run, may not be appreciably different from those used by an AI program. In other words, the human brain operates much like the neural nets used in machine learning (artificial neural nets were, after all, modeled on abstractions of the brain’s connections), and what seems miraculous and profound is, in fact, a down-to-earth process that a machine can probably duplicate. Even if AI architecture is only vaguely similar to that of our brains, the point is that how our brains work, even in producing our most “profound” artistic and intellectual products, is a lawful, mechanical process, just as it is in a machine, and there is no inherent reason a machine could not duplicate it or produce similar products. This is not a terrifying or depressing thought. I expect my kidneys, liver, heart, and gut to operate in lawful, “mechanical” ways that are neither mysterious nor miraculous. I won’t even be surprised if it becomes possible to replace any of them with mechanical devices. Why not my brain? The AI and I may not be so different, after all.

Sources (which I suggest you read):

Bostrom, N. Superintelligence: Paths, Dangers, Strategies. New York: Oxford University Press, 2014.

Christian, B. The Alignment Problem: Machine Learning and Human Values. New York: W. W. Norton, 2020.

Kissinger, H. A., Schmidt, E., & Huttenlocher, D. The Age of AI: And Our Human Future. New York: Little, Brown, 2021.

Kurzweil, R. How to Create a Mind: The Secret of Human Thought Revealed. New York: Viking Penguin, 2012.

Tegmark, M. Life 3.0: Being Human in the Age of Artificial Intelligence. New York: Alfred A. Knopf, 2017.